Instrument

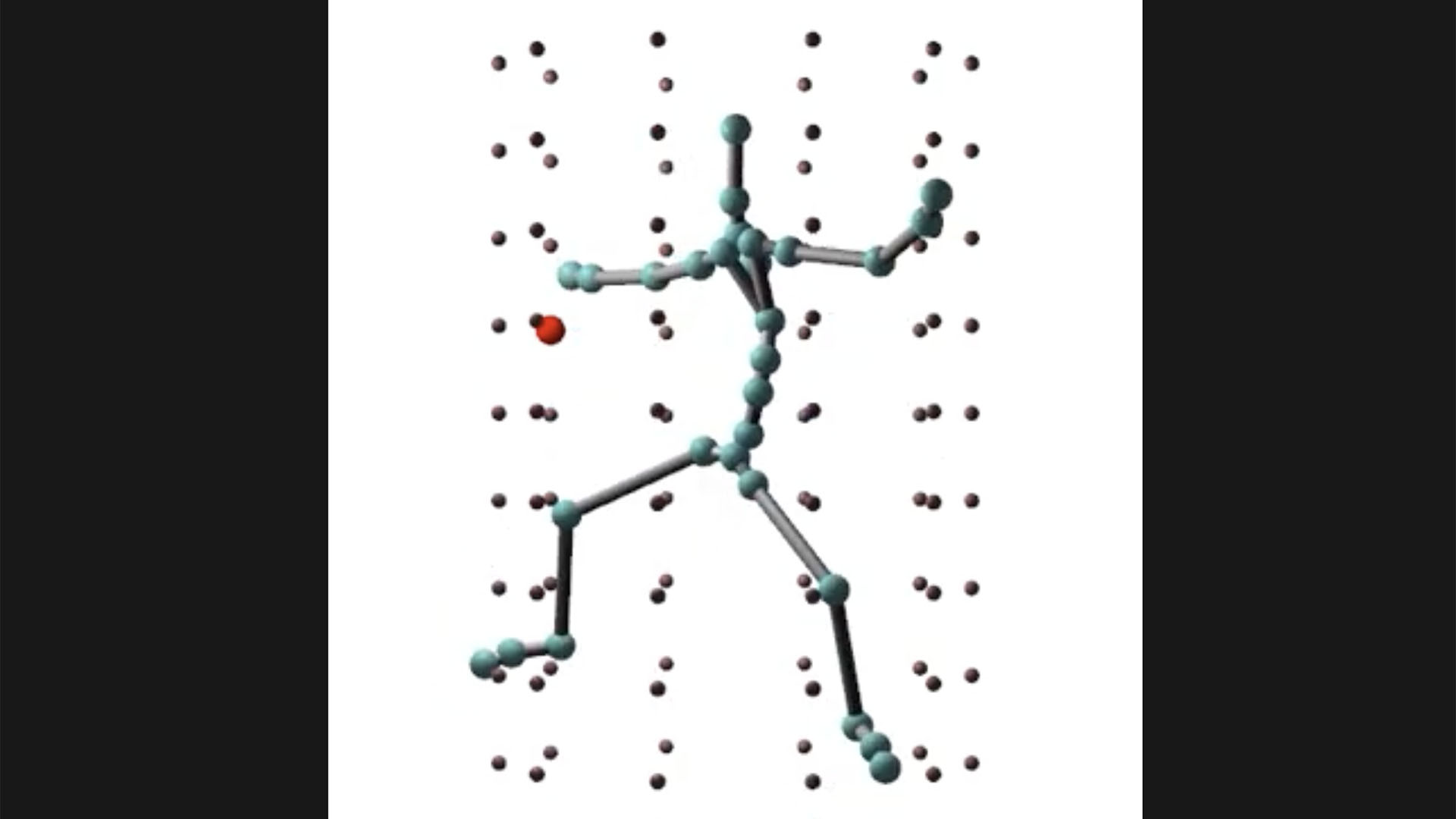

The avatar played the digital instrument by moving close to the instrument's model synthesis points and thereby exciting them. The playing was controlled indirectly, by varying the position of a target point that the avatar tried to reach with its right hand. Changing the target point's position together with the orientation of one avatar joint caused the GranularDance machine learning model to generate motions that repeatedly excited the sound synthesis points.
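The proximity-based excitation described above can be illustrated with a minimal Python sketch. It is not the project's implementation: the function name `excitation_levels`, the `radius` parameter, and the linear falloff are all assumptions made for illustration. The text only states that approaching a synthesis point excites it.

```python
import math


def excitation_levels(hand_pos, synth_points, radius=0.3):
    """Return one excitation value in [0, 1] per synthesis point.

    Assumption: excitation falls off linearly with the distance between
    the avatar's hand and the point, reaching zero at `radius`.
    The source does not specify the actual falloff.
    """
    if radius <= 0:
        raise ValueError("radius must be positive")
    levels = []
    for point in synth_points:
        dist = math.dist(hand_pos, point)  # Euclidean distance
        levels.append(max(0.0, 1.0 - dist / radius))
    return levels
```

Under these assumptions, moving the target point changes where the hand travels, which in turn changes which points receive a non-zero excitation.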

Several experiments were conducted in which the source motions for the avatar were chosen from short excerpts of the original mocap recording. This caused the avatar to exhibit partially periodic motions, which in turn manifested as recurring melodic patterns in the acoustic output.
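To illustrate why restricting the source material to a short excerpt leads to recurring patterns, here is a deliberately simplified Python sketch. It assumes that source frames are read cyclically from the excerpt, which is only a stand-in for the actual GranularDance synthesis. The function name and its parameters are hypothetical.

```python
def excerpt_frame_indices(start, length, n_steps, total_frames):
    """Return the source frame index used at each synthesis step.

    Assumption: frames are drawn cyclically from the excerpt
    [start, start + length). The real model is more involved.
    """
    if length <= 0 or start < 0 or start + length > total_frames:
        raise ValueError("excerpt must lie inside the recording")
    return [start + (step % length) for step in range(n_steps)]
```

With a three-frame excerpt starting at frame 10, the same three source frames recur every three steps, so a shorter excerpt yields a shorter period.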

Interaction

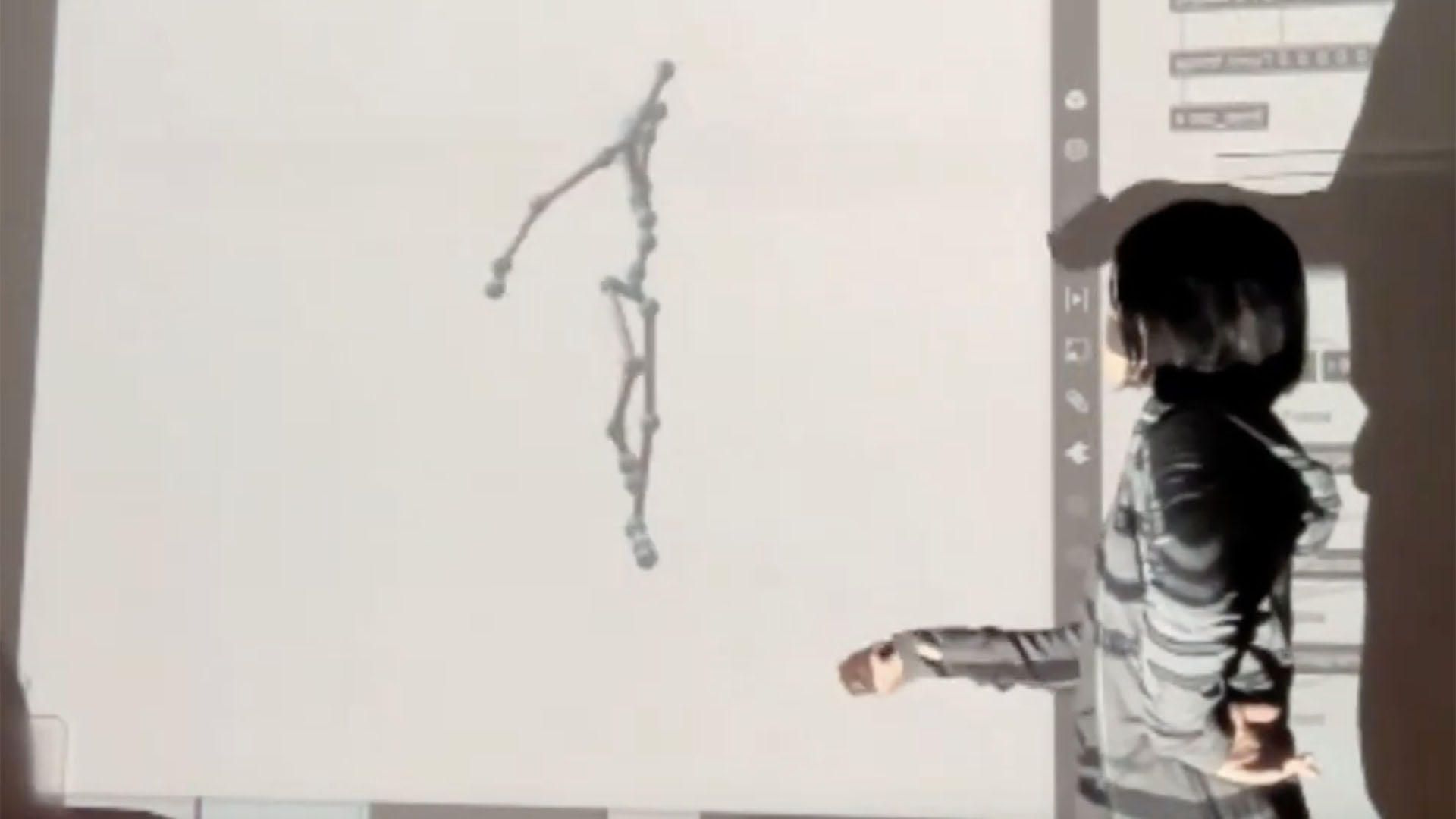

During several rehearsals, the dancer Emi Miyoshi explored her interaction with the avatar. The interaction mapped the orientation of one of the dancer's joints to the orientation of one joint of the avatar. The remaining joints of the avatar were controlled by the previously trained GranularDance machine learning model.
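The one-joint mapping can be sketched in Python as follows. This is an illustration under stated assumptions, not the project's code: poses are represented as dictionaries from joint names to unit quaternions `(w, x, y, z)`, and the names `apply_joint_control`, `avatar_pose`, and `dancer_quat` are hypothetical.

```python
import math


def apply_joint_control(avatar_pose, joint_name, dancer_quat):
    """Return a copy of the pose with one joint driven by the dancer.

    avatar_pose: dict of joint name -> quaternion (w, x, y, z), assumed
                 to come from the motion model.
    dancer_quat: orientation measured on the dancer's body, assumed
                 to be a quaternion in the same convention.
    """
    if joint_name not in avatar_pose:
        raise KeyError(f"unknown joint: {joint_name}")
    norm = math.sqrt(sum(c * c for c in dancer_quat))
    if norm == 0.0:
        raise ValueError("dancer_quat must be non-zero")
    controlled = dict(avatar_pose)  # leave the model's pose untouched
    controlled[joint_name] = tuple(c / norm for c in dancer_quat)
    return controlled
```

Only the selected joint is overwritten. All other joints keep the orientations produced by the model, which matches the division of control described above.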

Different aspects of the interaction were varied. The dancer experimented with different placements of the inertial measurement unit on her body. The avatar joint that was interactively controlled and the number of iterations over which the control was applied to the avatar were also changed. Lastly, the position and length of the excerpt of the motion capture recording from which the avatar synthesised its motions were varied.

How did you choose the music?